Arm brings new compute options from the cloud to the edge

In context: If you had told me even just a few years back that Arm would be creating designs that could compete in high performance computing (HPC) and other super demanding applications, I probably wouldn't have believed you. After all, Arm is primarily known for the power efficiency of its designs, hence its enormous success in smartphones and other battery-powered devices.

Sure, the performance of Arm-based smartphone chips, such as Apple's A series for iPhones, Qualcomm's Snapdragon line for Android devices, and others, has been improving dramatically over the past few years, but there's a big gap between smartphones and HPC.

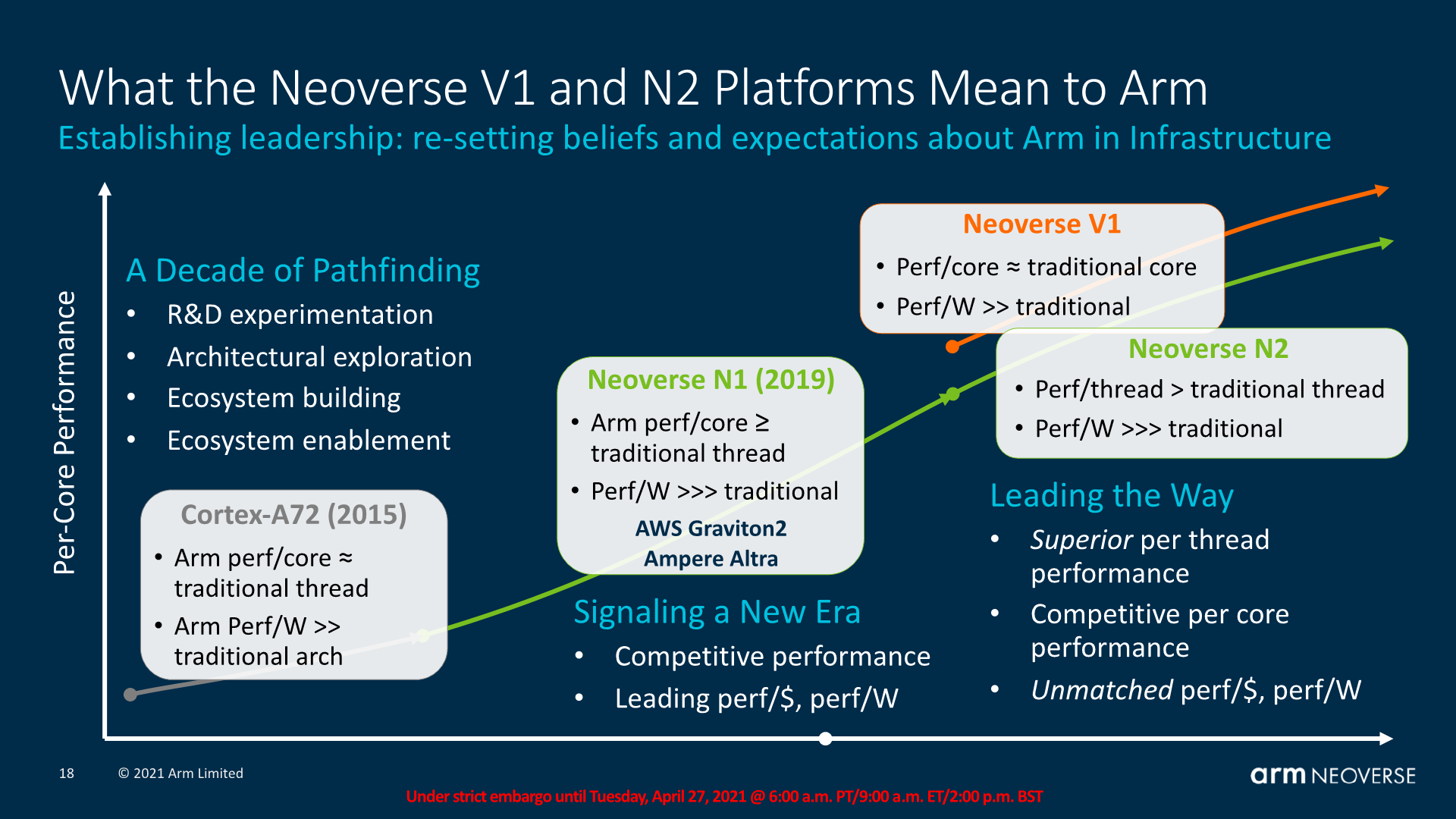

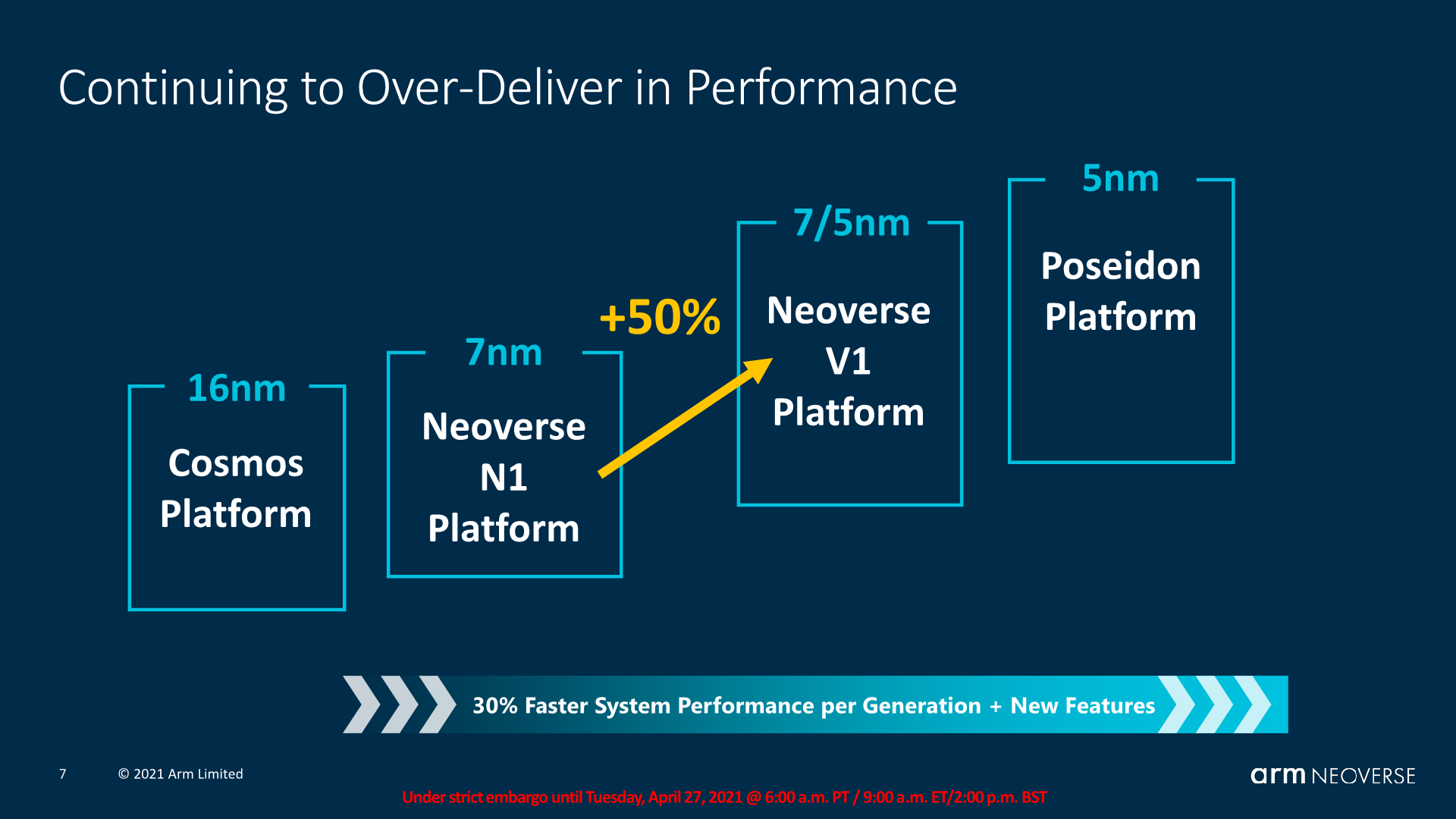

However, things started to change when Arm unveiled its Neoverse N1 platform for cloud and datacenter-focused applications back in 2019. With the debut of the N1 CPU design, the company signaled strongly to its partners and the computing world overall that it was serious about making the move into the server market.

The effort notched a number of notable design wins, including AWS' Graviton processor, which Amazon is now using, along with its successor the Graviton 2, for an increasingly broad range of different workloads. Still, much of the early effort and success focused on the power efficiency of the Arm-based designs for cloud data centers, an important but often overlooked factor in those environments.

Earlier this week, however, Arm further extended its Neoverse family with the launch of the V1 platform, which is targeted towards high-performance applications. The company says the V1 offers an impressive 50% improvement in instructions per clock (IPC), a 2x increase in vector performance, and a 4x jump in machine learning performance versus the original N1.

Part of the way Arm is achieving these new performance metrics is through the addition of Scalable Vector Extensions (SVE), which essentially enables custom instructions that can handle data blocks of any length to be added to CPU designs. More importantly, it allows code written for one type of vector length to be capable of running on hardware that may have a different vector length, thereby improving the flexibility and portability of the software involved.
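To make the portability point concrete, here is a minimal Python sketch of a vector-length-agnostic loop. It is not Arm code and uses no SVE instructions: `whilelt`, `vla_add`, and `lanes_for` are hypothetical helper names chosen for illustration, and the per-lane mask only imitates the kind of predication SVE relies on. The idea it shows is that the loop asks for the lane count at run time instead of hard-coding it, so the same code gives the same answer on "hardware" of different vector widths.

```python
from typing import List


def whilelt(start: int, end: int, lanes: int) -> List[bool]:
    """Build a per-lane predicate: lane i is active while start + i < end."""
    return [start + i < end for i in range(lanes)]


def lanes_for(vector_bits: int, element_bits: int = 32) -> int:
    """Number of elements that fit in one vector register of the given width."""
    return vector_bits // element_bits


def vla_add(a: List[float], b: List[float], lanes: int) -> List[float]:
    """Add two arrays with a loop that never hard-codes the vector width."""
    if len(a) != len(b):
        raise ValueError("inputs must have the same length")
    n = len(a)
    out = [0.0] * n
    i = 0
    while i < n:
        # The predicate switches off lanes that would run past the end,
        # so no separate scalar "tail" loop is needed.
        pred = whilelt(i, n, lanes)
        for lane, active in enumerate(pred):
            if active:
                out[i + lane] = a[i + lane] + b[i + lane]
        i += lanes
    return out


# Same source code, three assumed vector widths (in bits).
a = [float(x) for x in range(10)]
b = [1.0] * 10
results = {bits: vla_add(a, b, lanes_for(bits)) for bits in (128, 256, 512)}
```

Every entry in `results` holds the same list, even though the 10-element input is not a multiple of any of the lane counts. That is the property the paragraph above describes: the code is written once and the vector width becomes a detail of the hardware it runs on.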

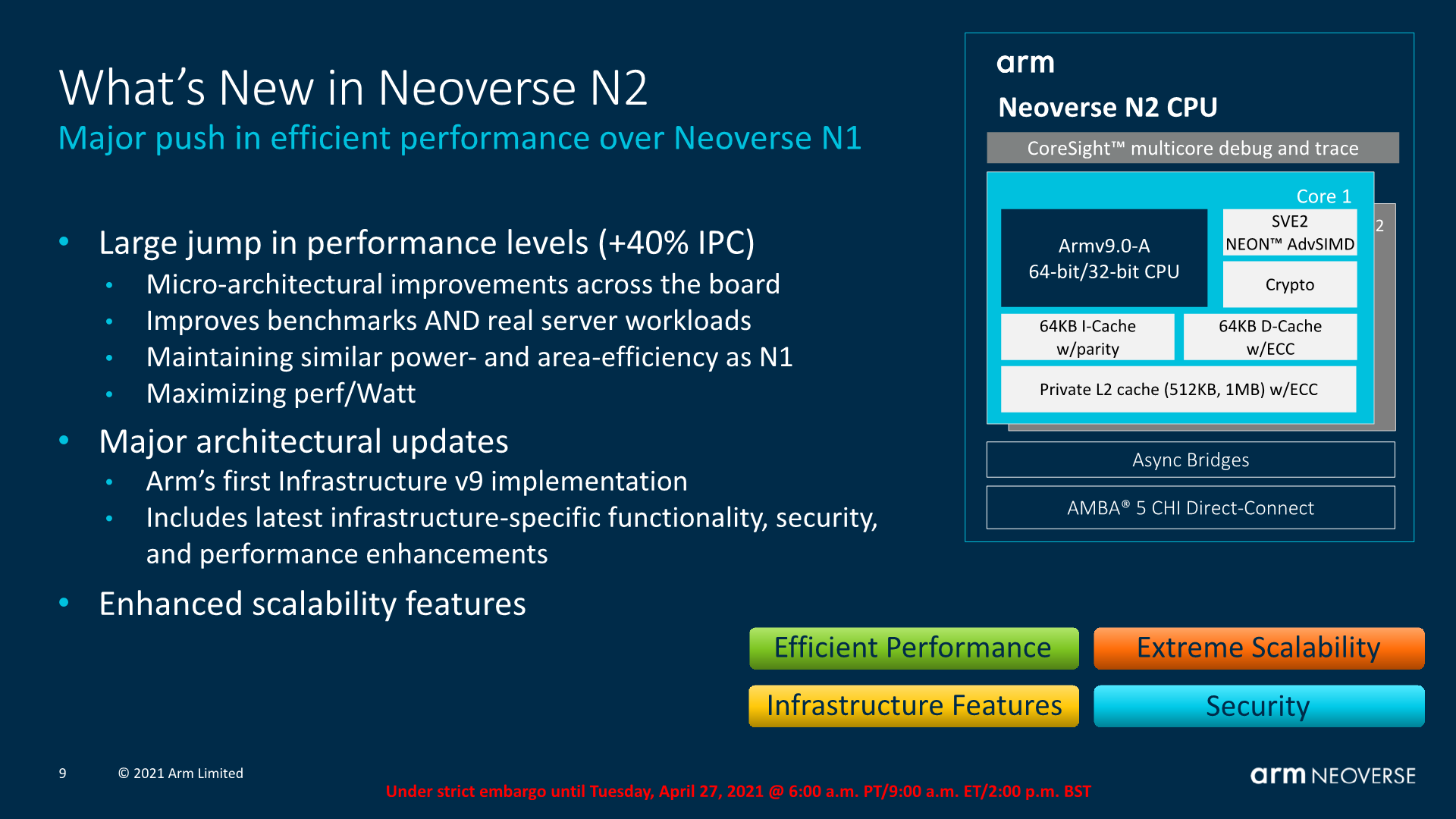

Neoverse N2 - Arm's first Armv9 Infrastructure CPU

In addition to the V1, Arm also unveiled the second-generation N2, which is based on the same performance/watt-focused design of the original N1, but with a large range of microarchitectural enhancements. Notably, the N2 is also the first Arm CPU design to leverage their v9 architecture (see "Arm Lays Out Vision for Next Decade of Chips" for more). Because of that new underlying architecture, it adds support for SVE2, the second generation of Arm's Scalable Vector Extensions.

All of this translates into a claimed 40% improvement in performance versus the original N1, while maintaining the lower power consumption and thermals of the N1. Just as important, it provides proof of Arm's intention to continue building out and advancing its range of server-focused chip designs. With the two new chip architectures, companies like Ampere and other Arm partners can choose to create datacenter-focused SoCs that are either more concentrated on performance (but consume more power) or more concentrated on energy efficiency, while still providing improved speeds.

A critical but easy-to-overlook part of Arm's announcements is the debut of their CoreLink CMN-700 Coherent Mesh Network for use in conjunction with these new CPUs. Built to enable more flexible chiplet-style designs, the CMN-700 provides the critical high-speed data connections between components on an SoC.

For example, semiconductor makers who want to have more options for connecting custom accelerators, as well as advanced forms of memory and storage, can leverage Arm's mesh network technology to do so. CoreLink CMN-700 now includes support for the CXL (Compute Express Link) and CCIX (Cache Coherent Interconnect for Accelerators) standards, as well as providing gateways that can link these buses to Arm's own AMBA 5 CPU interconnect bus. The bottom line is a significantly more flexible range of design options that will let chip designers more easily piece together specialized parts using Lego block-like chunks of functionality.

Practically speaking, these capabilities allow companies like Marvell to use these new Arm technologies in products like their next-generation DPUs (Data Processing Units) for use in 5G and other high-speed networking applications, as well as Oracle for their cloud infrastructure.

Because of the nature of their design process and the role they play in the industry, many of these new Arm innovations won't be in real world applications until 2022 and beyond. Still, it's good to see the company pushing the boundaries of cloud computing and edge computing performance beyond their original goals, and it will be interesting to see where and how far their partners take these capabilities.

Bob O'Donnell is the founder and chief analyst of TECHnalysis Research, LLC, a technology consulting firm that provides strategic consulting and market research services to the technology industry and professional financial community. You can follow him on Twitter @bobodtech.

Source: https://www.techspot.com/news/89478-arm-brings-new-compute-options-cloud-edge.html

Posted by: irvinlosom1936.blogspot.com
